The visual data transformation platform that lets implementation teams deliver faster, without writing code.



Most field mapping tools solve the easy 60% — exact column name matches. The other 40% — semantic variations, multi-field combinations, conditional business logic — still routes to a developer or ends up in a brittle spreadsheet.

The common approaches each break in predictable ways:

Is there a platform that can auto-suggest mappings from messy CSVs and APIs into our defined common data model?

DataFlowMapper was built for exactly that. Its AI mapping layer covers three areas:


You receive a client's CSV file with 80 columns. Your target system expects a specific structure. Some names align directly, others are variations (CustID vs. Customer_ID), some need multiple source fields combined, and a few have no obvious match at all. Doing this by hand is slow, error-prone, and has to be done again from scratch the next time a similar source arrives.

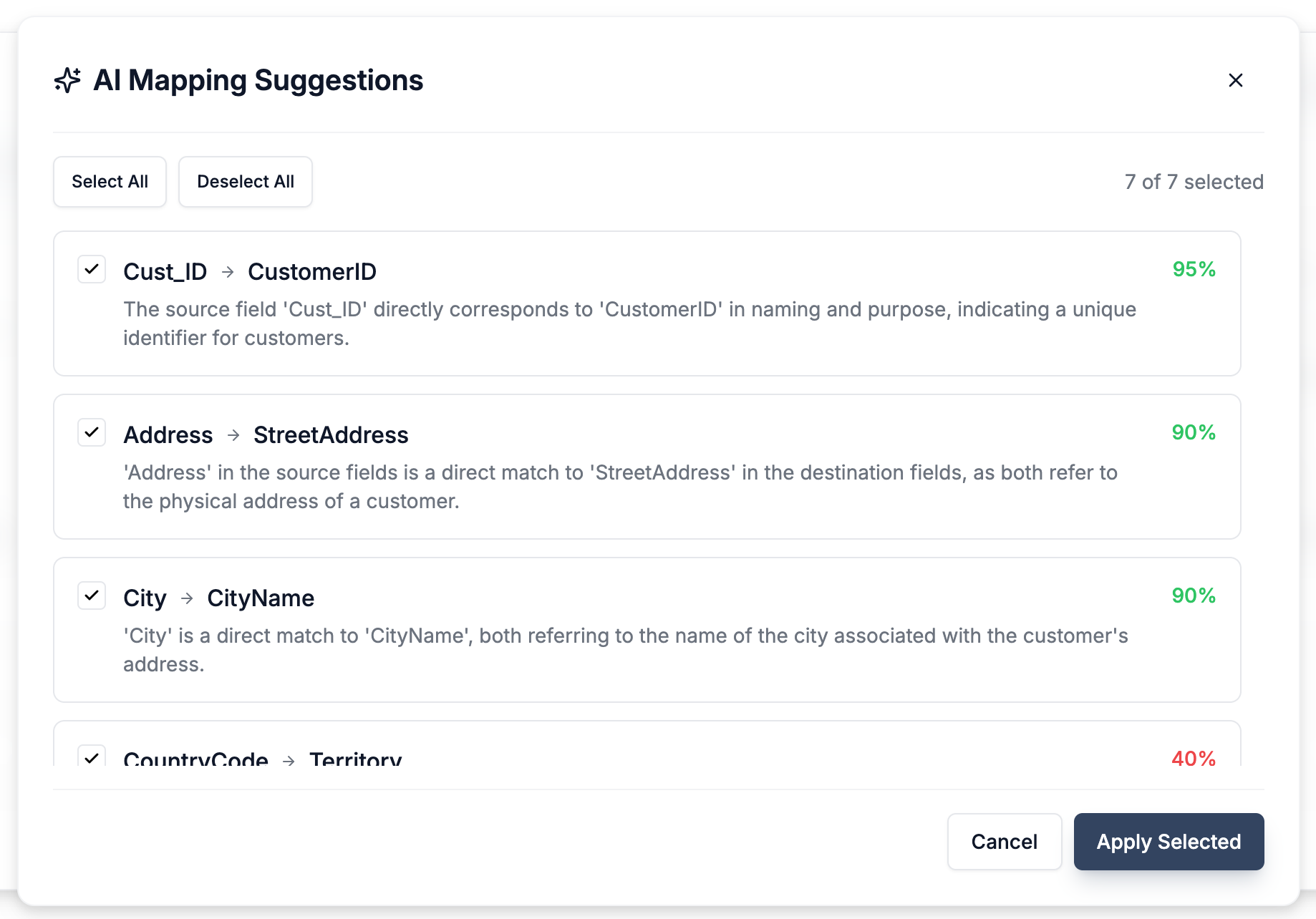

DataFlowMapper is built for exactly this. As an AI importer that suggests column mappings automatically, it returns a confidence score alongside each proposed match, so teams can bulk-accept the obvious connections and reserve judgment for the ambiguous ones. For example:

- dob → Date of Birth: 97 (exact semantic match)
- ref_no → transaction_id: 62 (likely match, review recommended)
- acct_num → account_number: 91 (strong abbreviation match)

High-confidence matches are applied automatically. Low-confidence ones are flagged for human review instead of being silently applied incorrectly, which is the critical failure mode of rule-based approaches.
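The accept-or-review split can be sketched as a simple triage over scored suggestions. This is a minimal illustration, not DataFlowMapper's implementation: the `Suggestion` shape and the threshold of 90 are assumptions, applied here to the example scores above.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    source: str
    destination: str
    confidence: int  # 0-100, like the example scores above


# Hypothetical cut-off; tune it to how much review work your team can absorb.
AUTO_ACCEPT = 90


def triage(suggestions):
    """Split suggestions into auto-applied matches and matches routed to a human."""
    accepted, review = [], []
    for s in suggestions:
        (accepted if s.confidence >= AUTO_ACCEPT else review).append(s)
    return accepted, review


if __name__ == "__main__":
    accepted, review = triage([
        Suggestion("dob", "Date of Birth", 97),
        Suggestion("ref_no", "transaction_id", 62),
        Suggestion("acct_num", "account_number", 91),
    ])
    print([s.source for s in accepted])  # ['dob', 'acct_num']
    print([s.source for s in review])    # ['ref_no']
```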

Benefit: Dramatically reduces time spent on obvious field matching, catches variations that exact-string approaches miss, and surfaces only the genuinely ambiguous mappings for human decision-making. This is the core of what it means to automate field mapping.

Fig: AI instantly suggests mappings between source and destination fields with confidence scores.


For situations where you want to configure the full mapping from a set of requirements rather than field-by-field, DataFlowMapper's Map All lets you describe what the transformation should do in plain English:

"Map standard fields like Name, Address, and City directly. Combine 'FirstName' and 'LastName' into 'ContactName'. Set 'AccountStatus' to 'Active' for all records. If 'Region' is 'North' or 'South', set 'Territory' to 'Americas', otherwise 'EMEA'."

The AI interprets these holistic instructions, performs the 1-to-1 direct mappings, and automatically configures the necessary custom logic for fields requiring conditional or concatenation rules. The result is a near-complete mapping file in seconds — a starting point for refinement rather than a blank canvas.

This is especially useful on initial setup with wide or complex files where requirements are already documented and you just need them turned into a functional mapping configuration.
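To make "near-complete mapping file" concrete, here is one way the plain-English instruction above could be represented as declarative rules and applied to a source record. The rule format (`direct`, `concat`, `constant`, `conditional`) and the `apply_mapping` helper are hypothetical; DataFlowMapper's actual mapping file format is not described here.

```python
# Hypothetical rule format: each destination field maps to a tuple whose first
# element names the rule type. Mirrors the example Map All instruction above.
MAPPING = {
    "Name": ("direct", "Name"),
    "Address": ("direct", "Address"),
    "City": ("direct", "City"),
    "ContactName": ("concat", ["FirstName", "LastName"], " "),
    "AccountStatus": ("constant", "Active"),
    "Territory": ("conditional", "Region", {"North", "South"}, "Americas", "EMEA"),
}


def apply_mapping(record: dict, mapping: dict) -> dict:
    """Produce one destination record from one source record."""
    out = {}
    for dest, rule in mapping.items():
        kind = rule[0]
        if kind == "direct":
            out[dest] = record.get(rule[1], "")
        elif kind == "concat":
            _, fields, sep = rule
            # Skip missing parts so a lone first name doesn't get a trailing space.
            out[dest] = sep.join(record[f] for f in fields if record.get(f))
        elif kind == "constant":
            out[dest] = rule[1]
        elif kind == "conditional":
            _, field, matches, if_true, otherwise = rule
            out[dest] = if_true if record.get(field) in matches else otherwise
        else:
            raise ValueError(f"unknown rule type: {kind!r}")
    return out
```

Keeping the rules as data rather than as a script is what makes them reviewable: an analyst can read `MAPPING` without tracing control flow.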

Direct field mapping handles the obvious connections. But most real-world imports require logic: "If 'TransactionType' is 'REFUND' and 'DaysSincePurchase' < 60, calculate 'RestockFee' as 'Amount' × 0.15, otherwise 0." Or validation rules like "PostalCode must match the format for the given Country."
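Written as code, the two example rules above might look like the following. This is a hand-written sketch of what the rules mean, not output from DataFlowMapper's Logic Builder, and the postal-code patterns cover only three countries in simplified form; they are illustrative assumptions, not a complete reference.

```python
import re
from decimal import Decimal


def restock_fee(transaction_type: str, days_since_purchase: int, amount: Decimal) -> Decimal:
    """Refunds made fewer than 60 days after purchase carry a 15% fee; otherwise 0."""
    if transaction_type == "REFUND" and days_since_purchase < 60:
        return amount * Decimal("0.15")
    return Decimal("0")


# Simplified, illustrative formats keyed by ISO 3166-1 alpha-2 country code.
POSTAL_PATTERNS = {
    "US": re.compile(r"\d{5}(-\d{4})?"),
    "CA": re.compile(r"[A-Z]\d[A-Z] ?\d[A-Z]\d"),
    "DE": re.compile(r"\d{5}"),
}


def postal_code_is_valid(country: str, postal_code: str) -> bool:
    """Check a postal code against the format for its country."""
    pattern = POSTAL_PATTERNS.get(country)
    if pattern is None:
        return False  # unknown country: fail closed so the row is flagged for review
    return pattern.fullmatch(postal_code.strip().upper()) is not None
```

Using `Decimal` rather than `float` keeps the fee exact for currency amounts.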

Encoding this in Excel formulas is brittle. Scripting it takes developer time and creates a maintenance burden whenever the logic changes. The question is how to generate ETL mapping and transformation logic without writing code from scratch every time.

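DataFlowMapper's visual Logic Builder lets you construct transformation and validation rules with a no-code interface. But when the rule is clear in your head, you shouldn't have to drag and drop every block manually. AI Logic Assist covers that case: describe the rule in plain English and it generates the corresponding transformation logic.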

The resulting logic — whether AI-generated or manually built — is saved as part of the mapping template. Repeatability is the point: when the next client from the same legacy system arrives, the logic runs again without rebuilding it. That's the difference between a three-day task and a three-hour one.

Benefit: Sophisticated transformation rules and validation become accessible to implementation specialists and analysts, not just developers. It directly addresses the ETL mapping bottleneck: how do you encode complex business rules in a maintainable, reviewable form without writing a script that only one person can understand?

For teams building their own import tools, automating ingestion pipelines, or managing schema drift across many source systems, the mapping intelligence itself — the ability to take a source schema and propose connections to a target schema — needs to be callable via API, not locked inside a UI workflow.

This is the use case for DataFlowMapper's Mapping Suggestions API.

Endpoint: POST /ai/mapping-suggestions · Full API reference: api.dataflowmapper.com/docs

What you send:

- sourceFields: array of column headers from your source file or schema
- destinationFields: array of target schema fields you want to map to
- sampleData: 50–500 rows of raw data (500 rows recommended for more accurate PII detection and better semantic analysis)

What you get back:

- Per-field mapping proposals, each with a confidence score and an explanation
- Semantic matches across naming conventions: "dob" resolves to "Date of Birth" and "cust_id" to "customer_identifier", with variations in naming convention handled automatically

Limits: 150 destination fields per request by default; enterprise tiers support custom limits for larger schemas.
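A minimal sketch of assembling this request with Python's standard library follows; it builds the request without sending it. The base URL, the row-object shape of sampleData, and the client-side range checks are assumptions (the 50–500 range is stated as a recommendation, so enforcing it is a local policy choice). Authentication is omitted because it is not described here; consult the API reference for the actual contract. The optional x-user-id, x-entity-id, and Idempotency-Key headers described next are attached only when values are supplied.

```python
import json
import urllib.request

BASE_URL = "https://api.dataflowmapper.com"  # assumption: confirm in the API reference
MAX_DESTINATION_FIELDS = 150  # default per-request limit
MIN_SAMPLE_ROWS, MAX_SAMPLE_ROWS = 50, 500  # documented range, enforced locally


def build_mapping_request(source_fields, destination_fields, sample_rows,
                          user_id=None, entity_id=None, idempotency_key=None):
    """Build (but do not send) a POST /ai/mapping-suggestions request."""
    if len(destination_fields) > MAX_DESTINATION_FIELDS:
        raise ValueError(f"{len(destination_fields)} destination fields exceeds "
                         f"the default limit of {MAX_DESTINATION_FIELDS}")
    if not MIN_SAMPLE_ROWS <= len(sample_rows) <= MAX_SAMPLE_ROWS:
        raise ValueError("sampleData should contain 50-500 rows")
    body = {
        "sourceFields": list(source_fields),
        "destinationFields": list(destination_fields),
        "sampleData": list(sample_rows),  # assumption: rows as header -> value objects
    }
    headers = {"Content-Type": "application/json"}
    # Optional observability and retry-safety headers.
    if user_id:
        headers["x-user-id"] = user_id
    if entity_id:
        headers["x-entity-id"] = entity_id
    if idempotency_key:
        headers["Idempotency-Key"] = idempotency_key
    return urllib.request.Request(
        BASE_URL + "/ai/mapping-suggestions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )
```

Note that `urllib.request.Request` normalizes header names with `str.capitalize()` (for example `X-entity-id`), which is harmless because HTTP header names are case-insensitive.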

Optional headers for observability:

- x-user-id: end-user ID or email for per-user logging
- x-entity-id: client-defined entity ID (e.g., Customer_123) for reporting
- Idempotency-Key: UUID that makes retries safe; a repeated key returns the cached result

The Mapping Suggestions API is designed for several concrete patterns:

Automated importer column matching: A SaaS product that accepts client file uploads can call the API on upload, auto-select the most likely destination mappings, and surface only the ambiguous fields for user confirmation — instead of requiring users to map every column manually.

Common data model normalization: Teams ingesting data from many different source systems into a single canonical schema can use the API to generate mapping proposals for each new source, review confidence scores to catch edge cases, and build a mapping library over time.

Schema drift detection: When a source file format changes — a renamed column, a new field, a different date format — the API can compare the new source headers against a known target schema and surface what no longer maps cleanly, rather than silently breaking the transform.

Programmatic onboarding pipelines: Implementation teams building repeatable onboarding scripts can call the API as part of the ingestion workflow: new client file arrives, mapping proposals generated, high-confidence matches applied automatically, low-confidence matches routed for human review.
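In a pipeline like this, retries are where the Idempotency-Key header matters: generating the key once per logical request and reusing it on every retry means a call that timed out but actually succeeded server-side returns the cached result instead of being processed twice. A sketch, assuming a caller-supplied `send` function (hypothetical) that performs the HTTP call and raises `ConnectionError` on transient failures; the backoff values are arbitrary.

```python
import time
import uuid


def call_with_retries(send, payload, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call send(payload, headers), retrying transient failures with one stable key."""
    headers = {"Idempotency-Key": str(uuid.uuid4())}  # generated once, not per attempt
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload, headers)
        except ConnectionError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff: 0.5s, 1s, ...
```

The `sleep` parameter exists so tests can skip real waiting.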

This is the same intelligence that powers the in-product AI suggestions — exposed as a utility API so it can be composed into whatever workflow or application your team is building.

For teams that need the full import workflow — file upload, template matching, transformation, validation, and governed delivery to your S3 bucket — rather than just the mapping intelligence layer, see DataFlowMapper's Embedded Import Portal.

For organizations running AI-powered data migration at scale, onboarding dozens of clients or executing large platform migrations, the next step beyond AI mapping suggestions is fully autonomous agents. See how AI Agents automate end-to-end customer onboarding →

Most automated data mapping tools work at the column-header level: they match FirstName to first_name using string similarity and call it done. That handles the easy 60%. The other 40% — semantic variations, multi-field combinations, conditional logic, business rules — still routes to a developer or gets handled with brittle spreadsheet formulas.

DataFlowMapper addresses the full stack:

The result for implementation teams: a data transformation workflow where AI handles the obvious work, flags the ambiguous, and gives analysts the tools to encode the rest — without writing a new script for every source file.

DataFlowMapper's AI capabilities use large language models and machine learning trained on data transformation and schema mapping patterns. The system understands common field naming conventions across industries, recognizes PII patterns in sample data (masking before AI processing), and generates logic that maps directly to DataFlowMapper's transformation engine — not generic code that requires adaptation.
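The idea of masking before AI processing can be illustrated with a few regular expressions applied to sample rows. This is not DataFlowMapper's detector, whose method isn't described here; the two patterns (email addresses and US Social Security numbers in NNN-NN-NNNN form) are simplified examples that will miss many kinds of PII. Masking on your side also removes some of the content signal used for mapping inference, so treat it as a policy choice.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(\.[\w-]+)+")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
]


def mask_value(value: str) -> str:
    """Replace every matching substring with a <LABEL> placeholder."""
    for label, pattern in PII_PATTERNS:
        value = pattern.sub(f"<{label}>", value)
    return value


def mask_rows(rows):
    """Return copies of the rows with string values masked; other types pass through."""
    return [{k: mask_value(v) if isinstance(v, str) else v for k, v in row.items()}
            for row in rows]
```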

For the Mapping Suggestions API, the sample data is used for contextual analysis and PII detection, then the top representative rows are passed to the AI for mapping inference. The more sample data you provide, the more accurately the model can distinguish fields that look similar on name alone but differ in content (e.g., two date columns that map to different destination fields based on their values).
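Because 50 to 500 rows are recommended, large files need to be cut down before the call. One option, which is our assumption rather than anything the API requires, is reservoir sampling (Algorithm R): it draws a uniform random sample in a single pass without loading the whole file. Taking the first N rows is simpler but can miss values that only appear later in a sorted export.

```python
import csv
import io
import random


def sample_csv(stream, k=500, seed=None):
    """Return (headers, up to k uniformly sampled rows) from a CSV text stream."""
    rng = random.Random(seed)
    reader = csv.DictReader(stream)
    reservoir = []
    for i, row in enumerate(reader):
        if i < k:
            reservoir.append(row)
        else:
            j = rng.randint(0, i)  # inclusive bounds: row i is kept with probability k/(i+1)
            if j < k:
                reservoir[j] = row
    return list(reader.fieldnames or []), reservoir


if __name__ == "__main__":
    demo = "dob,acct_num\n" + "".join(f"1990-01-01,A{i}\n" for i in range(2000))
    headers, rows = sample_csv(io.StringIO(demo), k=500, seed=42)
    print(headers, len(rows))  # ['dob', 'acct_num'] 500
```

The returned headers and rows map directly onto sourceFields and sampleData.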

Integrating AI data mapping tools into your workflow delivers:

Stop letting manual field matching slow down implementation projects. The automated data mapping tools that actually work for complex, recurring imports are the ones that combine AI-assisted field matching, logic generation, and reusable templates — not just a smarter column-matching widget.

For insurance teams applying this to recurring file processing, see how AI-assisted mapping fits into a full bordereaux workflow →.

Yes. DataFlowMapper's AI mapping engine accepts source column headers, sample data, and your target schema, then returns confidence-scored field mapping proposals with human-readable explanations for each match. It works across CSV, Excel, JSON, PDF, Word documents, and API sources. The same intelligence is available programmatically via the Mapping Suggestions API for teams building their own importers.

AI-suggested mappings (auto-matched with confidence scores you review and approve), Map All (plain-English instructions that configure an entire file's mappings at once), and AI Logic Assist (generates transformation logic from plain English) together eliminate the majority of manual mapping and logic-building work. For programmatic use cases, the Mapping Suggestions API provides the same intelligence via REST.

Automated data mapping uses AI to analyze source and destination schemas, then propose or generate the connections between fields — replacing manual column-by-column work. DataFlowMapper extends this to include automated logic generation: not just which fields connect, but what transformation rules and conditions apply to each one.

AI mapping tools use semantic understanding to match fields by meaning rather than exact string. A field called 'dob' maps correctly to 'Date of Birth' even without an exact name match. Confidence scoring surfaces low-certainty suggestions for human review rather than silently making wrong decisions — which is the core failure mode of purely rule-based approaches.

Yes. DataFlowMapper's Mapping Suggestions API accepts source fields, destination fields, and sample data (50–500 rows recommended), and returns per-field mapping proposals with confidence scores and explanations. It supports up to 150 destination fields per request by default, with enterprise tiers available for larger schemas.

Traditional ETL mapping requires manually specifying every field relationship in configuration files or code. AI data mapping infers those relationships from schema analysis and sample data, then generates the mapping specification automatically. DataFlowMapper combines both: AI generates the initial mapping, and the visual spreadsheet-style editor lets you review, adjust, and save reusable templates — so the next similar source file takes minutes, not days.