AI workbench for client data onboarding. Built for implementation teams at vertical SaaS.

Book WalkthroughNewsletter

Get the latest updates on product features and implementation best practices.

AI workbench for client data onboarding. Built for implementation teams at vertical SaaS.

Book WalkthroughNewsletter

Get the latest updates on product features and implementation best practices.

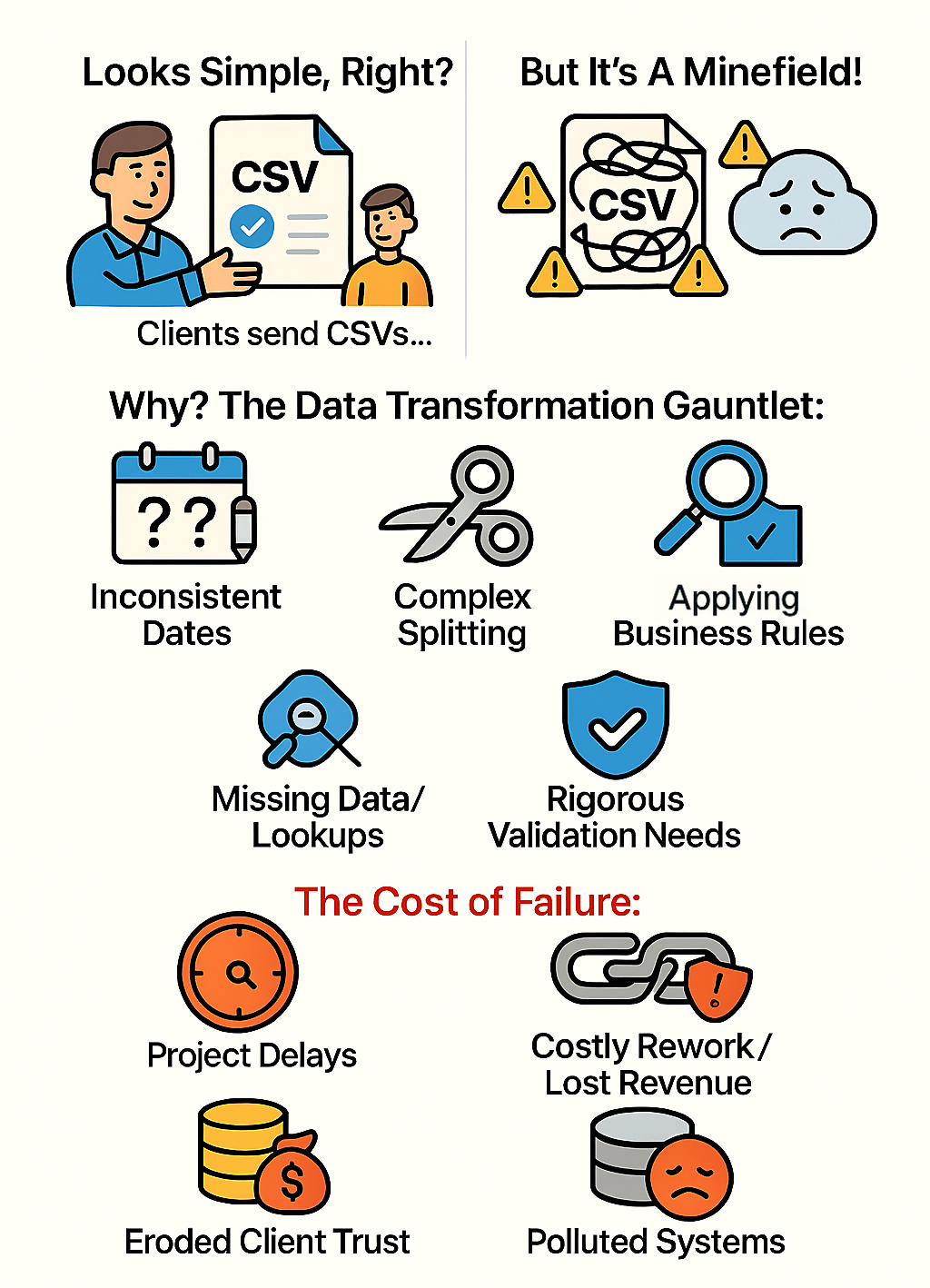

Implementation teams, data migration specialists, and data onboarding professionals face a constant challenge: client data is never system-ready. Tasked with loading external data into systems like Salesforce, NetSuite, Workday, or custom applications, the bottleneck isn't parsing the file structure. It's standardizing the chaos within it.

Failed or inaccurate data onboarding directly impacts project timelines and client satisfaction. Relying on basic csv upload tools or manual Excel work is insufficient for enterprise requirements.

This guide provides a technical deep dive into CSV data transformation. We illustrate common csv data cleaning steps and csv data validation techniques using a concrete scenario: transforming a messy contact list CSV into a standardized format ready for CRM import.

We compare three approaches: traditional spreadsheets (Excel), scripting (Python/SQL), and specialized Data Onboarding Infrastructure like DataFlowMapper.

Imagine receiving 'source_contacts.csv' from a new client. It contains typical inconsistencies: mixed date formats, combined names, and missing region data.

Try DataFlowMapper free in your browser and follow along. Click here to signup

Download the example files: source_contacts.csv | destination_template.csv

Source Data ('source_contacts.csv'):

Full Name,email address,company,"Registered Date",Notes,ZIP

"Alice Wonderland","alice@example.com ","Wonderland Inc.","10/05/2024","Met at conference, interested in Product A",01234

Bob The Builder,bob@construct.co,,"5-May-24","Needs follow-up",98765

"Charlie Chaplin","charlie@silentfilms.net","Chaplin Studios","20240515","VIP",NULL

Diana Prince,diana@themyscira.org,Amazon Corp,"May 20th, 2024",, 54321

"Edward Scissorhands","edward@cuttingedge.com ","","2024-05-22","Referral",N/A

Fiona Shrek,"fiona@swamp.org","Far Far Away LLC","2024-05-23","Existing Customer",12345

"George Jetson","george@spacely.com","Spacely Space Sprockets, Inc.","6/1/2024","Inquired via website",90210

"Harry Potter","harry@hogwarts.edu","Ministry of Magic","20240602","Needs demo",00001

"Indiana Jones","indy@archaeology.org","Marshall College","June 5th, 2024","Sent artifacts",67890

"James Bond","007@mi6.gov.uk ","MI6","2024-06-07","Top Secret",SW1A 2AH

Target Requirements:

Client data often treats ZIP codes as numbers (dropping leading zeros) and dates as free text.

Excel:

=TEXT(

IF(ISNUMBER(D2), DATE(LEFT(D2,4),MID(D2,5,2),RIGHT(D2,2)), /* Handles YYYYMMDD */

IF(ISNUMBER(DATEVALUE(D2)), DATEVALUE(D2), /* Handles recognizable formats */

IFERROR(DATEVALUE(SUBSTITUTE(LEFT(D2,FIND(" ",D2)-1),"th","")), /* Handles 'Dayth' */

"INVALID_DATE"))),

"YYYY-MM-DD")

Python (Pandas):

import pandas as pd

# Explicitly read ZIP as string to preserve leading zeros

df = pd.read_csv('source_contacts.csv', dtype={'ZIP': str})

# Attempt flexible date parsing, coercing errors to NaT

df['RegistrationDate_dt'] = pd.to_datetime(df['Registered Date'], errors='coerce', infer_datetime_format=True)

df['RegistrationDate'] = df['RegistrationDate_dt'].dt.strftime('%Y-%m-%d')

DataFlowMapper Advantage:

TO_DATE intelligently parses common date formats without complex logic.

TO_DATE(Registered Date)FORMAT_DATE(result, "YYYY-MM-DD")

The CRM requires a 'LeadSource'. If 'Notes' contains "conference", set to 'Conference'; otherwise 'Event'.

Excel:

IF + ISNUMBER(SEARCH(...)). Nesting multiple conditions makes formulas unreadable.=IF(ISNUMBER(SEARCH("conference", E2)), "Conference", "Event")

SQL:

CASE WHEN statements. Readable for simple cases, but logic is buried in queries.SELECT CASE

WHEN LOWER(Notes) LIKE '%conference%' THEN 'Conference'

ELSE 'Event'

END AS LeadSource

DataFlowMapper Advantage:

The target CRM needs a 'Region' derived from 'ZIP' using an external database or API.

Excel:

VLOOKUP within the workbook. Cannot query external APIs or databases directly. Requires manual data staging.Python:

def get_region_from_api(zip_code):

try:

response = requests.get(f"https://api.example.com/zip/{zip_code}", headers=auth)

return response.json().get('region')

except requests.exceptions.RequestException:

return 'API Error'

DataFlowMapper Advantage:

region = remotelookup(source="ZipInfoAPISource", parameters={"zipParam": row["CleanedZIP"]}, return_field="RegionName")

Splitting 'Full Name' into 'FirstName' and 'LastName' requires handling variations like titles and suffixes.

Excel:

DataFlowMapper Advantage (Hybrid):

re (regex) directly in the mapping.

# Python code within DataFlowMapper's Logic Builder

import re

name = str(row['Full Name'] or '')

# Remove common titles

clean_name = re.sub(r'^\s*(Mr\.|Ms\.|Dr\.)\s+', '', name, flags=re.IGNORECASE).strip()

parts = clean_name.split()

result = parts[0] if parts else ''

Validating data before import is the only way to prevent database pollution.

Excel:

Python:

DataFlowMapper Advantage:

VALUECOUNT(row['Email']) > 1 flags duplicates in the current file.remotelookup checks if the email already exists in the target CRM.

| Approach | Pros | Why it fails for Implementation Teams |

|---|---|---|

| Excel | Familiar interface | High corruption risk. Silent errors (dropped zeros), no audit trail, manual & non-repeatable. |

| SQL | Powerful set-based ops | Infrastructure heavy. Requires staging tables; difficult to handle API lookups and file I/O. |

| Python | Unlimited flexibility | Technical Debt. Creates maintenance overhead; requires developer resources for every new client template. |

| DataFlowMapper | Purpose-Built Infrastructure | Combines visual speed with code power (Python/Regex). Native validation and enrichment. Repeatable. |

For implementation teams, data transformation is not a one-off task; it is a repeatable process that demands reliability. While Python offers power and Excel offers accessibility, neither is designed as collaborative infrastructure for client data onboarding.

DataFlowMapper bridges this gap. It provides the no-code speed for standard mappings and the low-code power for complex business rules. By standardizing on a platform, implementation teams can stop debugging scripts and start delivering faster migrations.

Standardize your implementation workflow. Build your first reusable mapping in the Logic Builder today.

While Python scripts are powerful, they create technical debt. Infrastructure provides a standardized, visual environment that allows non-engineers to build and validate logic, reducing the dependency on individual developers for every migration project.

Specialized data onboarding platforms like DataFlowMapper use visual logic builders. These allow implementation teams to define cleaning rules (like date standardization or email validation) once and apply them automatically to every new client file.

The most robust method is using a dedicated validation engine that runs post-transformation but pre-import. This ensures data is checked against business rules (e.g., regex for emails, unique constraints) and provides specific error reports before it ever touches your destination system.